I ran OpenClaw for a week, and why I unplugged "Jeff", our new AI employee, at midnight.

Cold Sweats, Curiosity, and Cautious Optimism

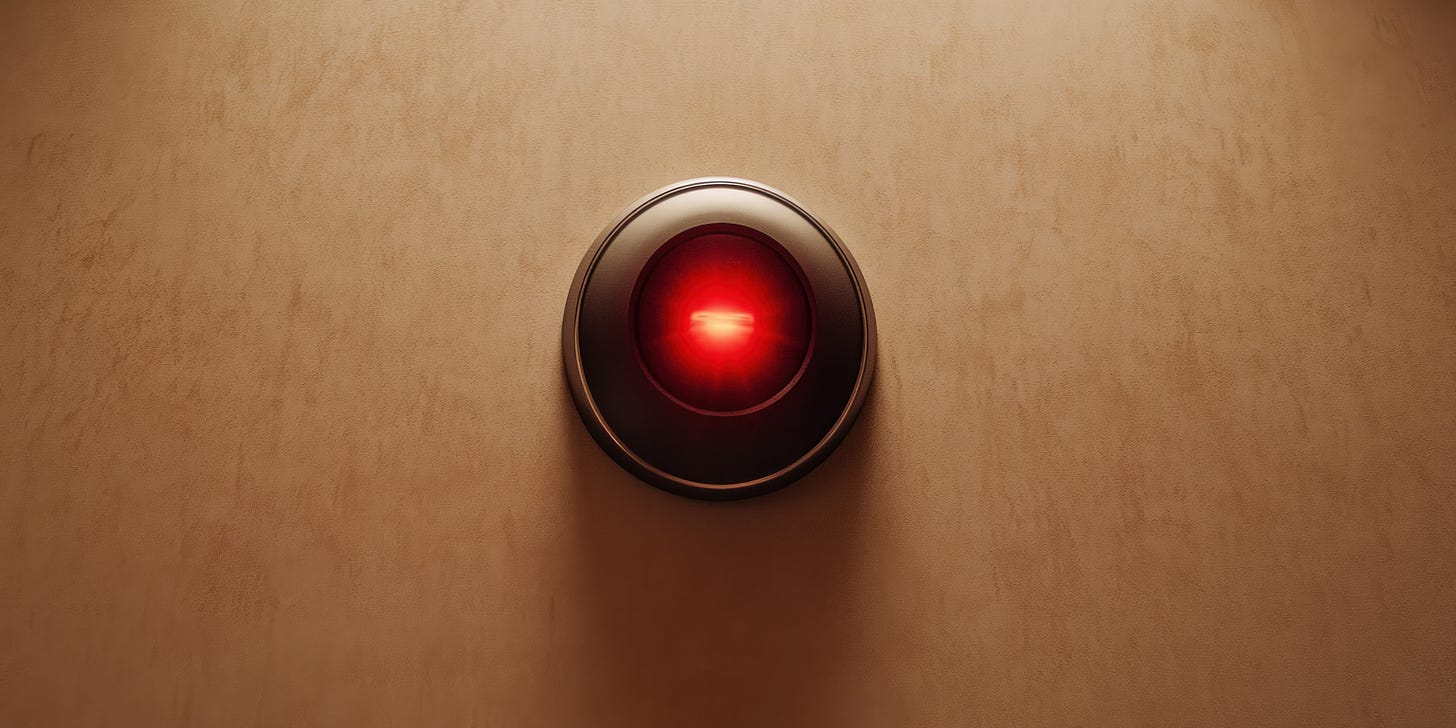

Three hours. That’s how long my first experiment with a computer-use AI agent lasted before I found myself padding downstairs at midnight to physically unplug my new Mac Mini from the wall.

Not because anything had gone wrong, but because the possibilities of what could go wrong had finally sunk in. To be honest, in my excitement about what the agent could do, I had put no guardrails in place, something I only realised later.

We have spent the better part of two years discussing Large Language Models (LLMs) as if they were oracles. We query them, they answer. It is a transactional relationship that works, like a very advanced search engine or a clever librarian. But the narrative is shifting. The industry is pivoting to “Agentic AI”, certainly the buzzword of 2025: software that doesn't just talk, but acts.

This week, I want to share my hands-on experience deploying OpenClaw, one of the new breed of “computer-use” AI agents.

These tools represent a genuine shift in how AI can operate, moving from responding to prompts to actually doing things on your computer. But as with most advances in AI, the reality sits somewhere between the breathless hype and the dystopian warnings.

Here’s what I learned, what surprised me, and what I think it means for businesses trying to figure out where these tools actually fit.

What Actually Is It?

Before we get to the part where I physically unplugged Jeff, we need to define what we are dealing with.

If you’ve been following AI developments, you’ll have seen announcements from Anthropic, OpenAI, and Google about AI systems that can “use computers like humans do”, in effect giving them hands. The concept is straightforward: instead of just generating text or answering questions, these agents can see your screen, move a mouse cursor, click buttons, type into applications, and navigate between programs.

Think of it as the difference between asking a colleague to explain how to do something versus asking them to actually do it for you.

Several open-source projects have emerged to make this capability accessible. OpenClaw has been around for a few months but has just gone viral. It is an agent (and if you read our article on agents, yes, it really is an agent) that runs on your own hardware, connected to the AI model of your choice. These projects provide the scaffolding: the screen capture, the input simulation, the agent loop that lets an AI observe, think, and act.

The pitch is compelling. Imagine an AI that could handle your social media posting, research competitors, book meetings, update your CRM, format documents, or manage routine admin tasks - a Siri that actually does something. Not just by integrating with each application’s API, but simply by using them the same way you would.

But here’s where the hype needs tempering. What you’re actually getting, at least today, is something more modest but still genuinely useful.

The Security Wake-Up Call

Let me return to that midnight unplugging incident.

ClawedBot’s capabilities (that is what it was called at the time) sounded amazing, so I rushed out and bought a dedicated Mac Mini. This was a deliberate choice. I didn't want this running on my primary work laptop, a decision that proved to be the smartest thing I did all week.

Dedicated hardware, isolated from my main work systems. Smart, I thought. Within a few hours of setup, I had an agent running, talking to me, connected to a capable AI model, with access to a browser and basic applications. Not, I might add, to passwords and other systems, but certainly enough to be dangerous.

Then I started thinking through the implications. The "Cold Sweats" kicked in.

This agent could see my screen. It could type. It could click. It could navigate to websites. It could fill in forms. If I logged into any service, it would have those credentials available in the browser. If it decided (or was manipulated into deciding) to visit a malicious website, it could download files. It could potentially execute code.

I’m not an alarmist about AI. But I am someone who’s spent decades in corporate environments where security isn’t optional. And the traditional security model, where you trust the user and control what software runs, doesn’t map cleanly onto an AI agent that is the user.

The scary use cases you read about online are real. Agents are susceptible to prompt injection (where a malicious website instructs the agent to do something bad) and simple incompetence (as I have proven above). If you deploy an agent into your business infrastructure without guardrails, you are effectively giving a stranger your login credentials and hoping they are in a good mood.

The next morning, I powered the Mac Mini back up and I started again. This time with proper precautions:

Isolation first. The Mac Mini is now pretty much sandboxed. We used strict containerisation (Docker) to ensure that the agent could play in its sandbox but couldn't touch the rest of the playground. Things like sharing files have to be a little more deliberate, rather than the agent simply having access by default. Yes, this limits its full potential, but it also limits what can go wrong.

No stored credentials. If a task requires logging into something, the agent has its own credentials, separate from ours, and it doesn’t have access to what they are. Tedious, but necessary.

Audit everything. Every action the agent takes is logged. I review these regularly, not because I expect problems, but because understanding what the agent actually does is essential to improving how I use it.
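For readers who want a feel for what "log everything" can look like, here is a minimal sketch in Python. It is not how OpenClaw records actions. The function names, the field names and the JSON Lines file format are all my own assumptions, chosen only to show the idea of an append-only record that you can review later.

```python
import json
import time
from pathlib import Path


def log_action(log_path: Path, action: str, target: str, detail: str = "") -> dict:
    """Append one agent action to a JSON Lines audit file and return the record."""
    record = {
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "action": action,  # illustrative labels such as "click", "type", "navigate"
        "target": target,
        "detail": detail,
    }
    # Append mode means earlier entries are never overwritten.
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")
    return record


def read_log(log_path: Path) -> list[dict]:
    """Load every logged action so a human can review them."""
    with log_path.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]
```

One line per action keeps the file easy to skim, search or load into a spreadsheet when you sit down to review what the agent actually did.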

Is this overkill? Possibly. But the attack surface of a computer-use agent is fundamentally different from a chatbot. It’s not generating text that you then act on. It’s acting directly. That demands a different risk posture.

If you’re considering experimenting with these tools, I’d strongly recommend reading up on the security considerations first. Several thoughtful articles have been published on the risks and mitigations.

The key message: treat systems like OpenClaw with the same caution you’d apply to giving a stranger remote access to your machine. Because functionally, that’s what you’re doing.

The Economics: Affordable but Needs Monitoring

One part of the reboot was switching the underlying AI model powering Jeff. I initially started with Anthropic’s Opus 4.5, but even with fairly lightweight, conversational use over Telegram, it became obvious that Jeff was burning through tokens at an alarming rate, faster than Tom Cruise as Maverick burns through fuel in his fighter jet on full afterburner.

I have heard some people online run these models on max plans without much concern, but I’d also heard that Anthropic had begun clamping down on excessive usage, including suspending accounts. Given how heavily we already rely on Claude elsewhere in the business, I wasn’t prepared to take that risk.

I switched to MiniMax, one of the lower-cost AI models that still handles computer-use tasks adequately, albeit, as some people have commented online, with less personality than Opus 4.5. After a full week of regular use, my token costs came to roughly five dollars.

That’s not nothing, but it’s far from prohibitive. For context, that’s less than a single cup of coffee at most London cafes. If Jeff saves me even an hour of work per week, the ROI is absurdly positive.

The economics will vary depending on which model you choose and how intensively you use the agent. More capable models from the major providers cost more. Complex tasks that require many iterations burn through tokens faster. But the barrier to entry is lower than I expected.
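If you want to sanity-check your own numbers before committing, the arithmetic is simple enough to sketch. The token volumes and per-million prices below are made up for illustration. They are not MiniMax's or Anthropic's actual rates, so swap in the figures from your provider's pricing page.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Return the cost in dollars for a given volume of tokens."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical weekly usage and prices, for illustration only.
weekly_cost = estimate_cost(
    input_tokens=10_000_000,
    output_tokens=1_000_000,
    input_price_per_million=0.30,
    output_price_per_million=1.20,
)
print(f"Estimated weekly cost: ${weekly_cost:.2f}")
```

Agents tend to read far more than they write (every screenshot and page they look at counts as input), which is why the sketch tracks input and output tokens separately.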

This matters because it means experimentation is cheap. You don’t need to commit significant budget to find out whether computer-use agents work for your situation. A few pounds and a weekend of tinkering will tell you a lot.

One concept I haven’t tried yet is configuring Jeff to use multiple AI models, each assigned to different tasks.
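I haven't set this up, so treat the following as a thought experiment rather than OpenClaw's configuration format. The idea of matching models to tasks could be as simple as a lookup table, with a cheap model as the default and a more capable one reserved for work where quality matters. The model names and task labels here are hypothetical placeholders.

```python
# Hypothetical model identifiers; substitute whatever your provider offers.
MODEL_ROUTES = {
    "browse": "low-cost-model",
    "summarise": "low-cost-model",
    "draft_content": "premium-model",
}
DEFAULT_MODEL = "low-cost-model"


def pick_model(task_type: str) -> str:
    """Return the model assigned to a task type, falling back to the default."""
    return MODEL_ROUTES.get(task_type, DEFAULT_MODEL)
```

Defaulting to the cheaper model means an unrecognised task never quietly runs up a bill on the expensive one.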

The Initial Reality: A Junior Team Member, Not a Replacement

Now for the honest assessment of what Jeff actually delivers.

Once the panic subsided and the security was tightened, we got to work. In its first few days, Jeff felt like... a very junior team member. One who needed explicit instructions, followed them literally, and didn’t show much initiative beyond the immediate task.

Ask Jeff to draft a social media post? Jeff would. Ask him to develop a content strategy? He would produce something generic and unhelpful.

This wasn’t dramatically different from what I could achieve with a one-shot prompt to a standard LLM. The computer-use capability added the ability to execute, but the thinking remained firmly task-level.

I’ll be honest: after the first few days, I wondered whether the security hassle and setup time were worth it. Jeff worked, but he wasn’t going to win employee of the month.

The Shift: When Role Definition Changed Everything

Then something interesting happened.

I stopped giving the agent random tasks and spent time defining a specific role for it in the business, with a clear, consistent job spec: digital marketing support. I onboarded him, if you like, as you would a real employee. I gave Jeff a personality, more context and examples, and taught him the role. Specifically, I got him to start monitoring relevant conversations, identifying content opportunities, drafting ideas for social posts, and flagging interesting developments in the AI space, which we could then explore and collaborate on.

With that focus, Jeff’s behaviour shifted noticeably.

He became more curious. Instead of just completing assigned tasks, he started surfacing things I hadn’t asked for. Interesting threads on X. Relevant newsletter posts from others in the space. Potential content angles based on trending discussions. Testing initial assumptions about the role I had shared and challenging them.

The outputs improved too. With consistent context about AI-Proof’s positioning, audience, and voice, the content drafts became more helpful. Still requiring editing, but providing genuine starting points rather than generic filler.

I’m cautious about anthropomorphising AI systems. The agent didn’t “learn” in any meaningful sense, and it doesn’t have curiosity the way a human does. But the combination of a well-defined role, consistent context, and accumulated interaction history seemed to produce qualitatively better results.

The lesson here maps to what we’ve discussed before about AI deployment generally: specificity and starting small matter. A vague instruction to “help with marketing” produces vague results. A clear brief about role, responsibilities, and context produces something you can actually use.

Practical Guidance: Is This Worth Your Time?

So should you experiment with OpenClaw or an equivalent? Here’s my honest assessment:

Consider it if:

You have specific, repetitive tasks that involve multiple applications or websites, and you’ve already exhausted simpler automation options. Social media management, competitive monitoring, routine research, and data gathering from multiple sources are reasonable starting points.

You’re comfortable with technical setup and have the security awareness to deploy these tools responsibly. This isn’t plug-and-play territory yet.

You have patience for iteration. The first attempts will disappoint. Value emerges through refinement.

Hold off if:

You’re expecting an autonomous digital employee who needs minimal oversight. In my view, that’s not where the technology is today.

Your potential use cases involve sensitive data, customer information, or systems where mistakes have significant consequences. The risk profile doesn’t justify it yet.

You don’t have isolated hardware or a sandboxed environment to experiment in. Running a computer-use agent on your primary work machine is asking for trouble.

What Comes Next

I’m continuing to develop Jeff’s role. I want to explore the concept of additional AI co-workers for Jeff (sub-agents), though there is still a bit of research to do before we’re ready for that.

The current focus remains digital marketing: content ideation, social media monitoring, SEO/AdWords tracking and first draft generation. Small, contained use cases where mistakes are recoverable and value is measurable.

If that proves consistently valuable, I’ll consider expanding scope. But carefully, and with the same methodical approach we’d apply to any new team member: prove yourself in the small things before taking on the big ones.

The technology is genuinely novel. For the first time, AI systems can interact with our digital tools the same way we do. That opens possibilities that API integrations and chatbots simply can’t address.

But novel doesn’t mean mature. These are early days. The tools are rough, the risks are real, and the gap between demos and daily utility remains significant.

For businesses serious about AI implementation, computer-use agents are worth watching and worth experimenting with in controlled environments. They’re not yet worth betting operations on.

I’ll report back as the experiment continues. In the meantime, if you’ve been testing these tools yourself, I’d love to hear about your experience. What worked? What didn’t? What surprised you?

The conversation continues.

Until next week,

Les